Synapse AI

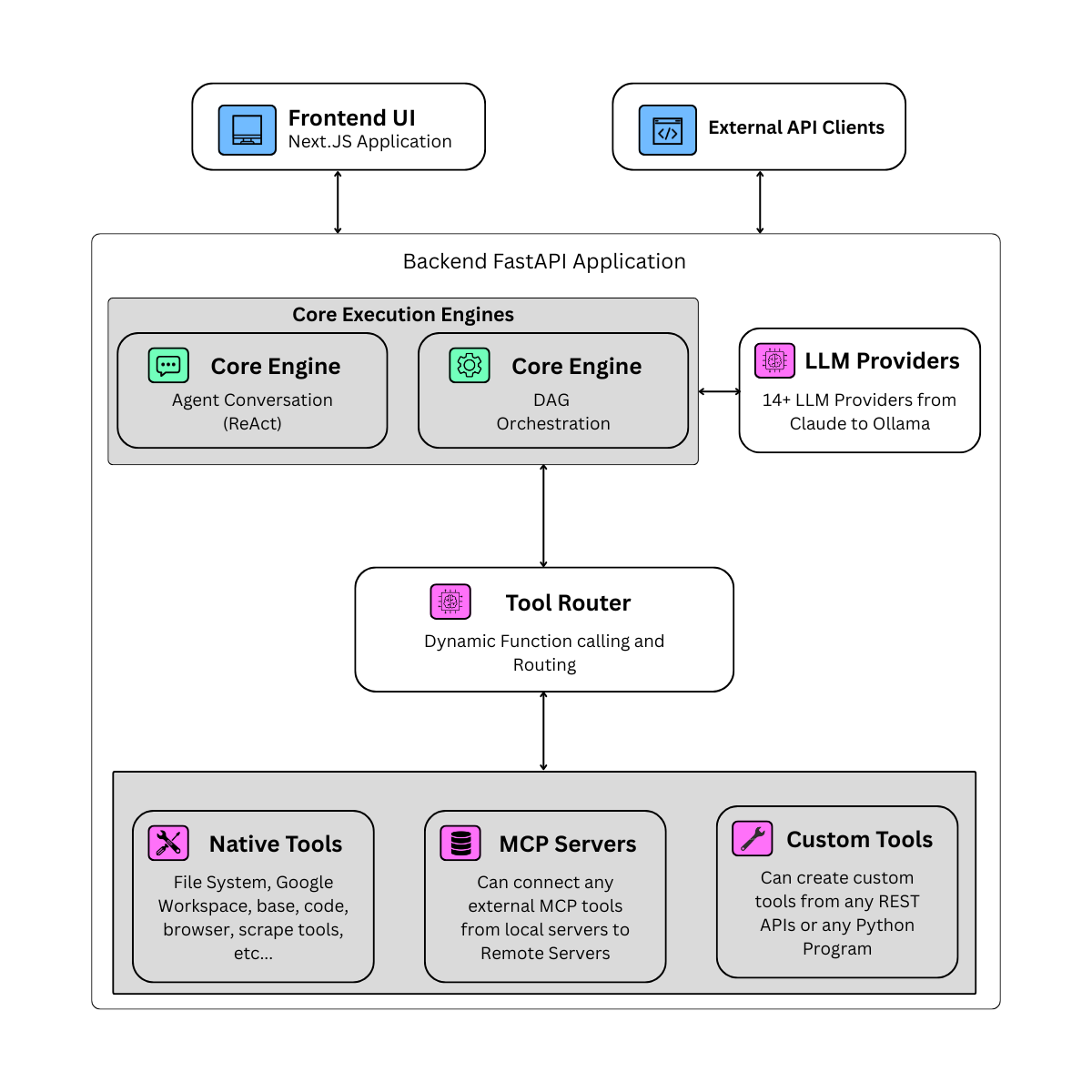

Synapse AI is a production-grade, open-source multi-agent orchestration platform for building, connecting, and executing AI agents powered by any LLM — local or cloud. It converts existing APIs and Python programs into agent tools, orchestrates them into deterministic end-to-end workflows, and exposes everything via a REST API — without vendor lock-in.

What can you build with Synapse?

- Agentic pipelines — Research → summarise → send report → await human approval → publish

- Customer-facing AI — Chat assistants connected to your database, docs, and internal APIs

- Scheduled automation — Cron jobs that run agents against live data every morning

- Internal tooling — Agents that query your code repos, databases, Jira, and Slack in one shot

- API-first AI — POST to `/api/v1/chat` and stream back structured responses
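As a rough illustration of the API-first use case, the sketch below builds a POST request for the chat endpoint and parses a streamed reply. Only the path `/api/v1/chat` comes from this page. The host and port, the JSON field names (`agent_id`, `message`), and the newline-delimited JSON stream format are assumptions made for the example, so consult the REST API Reference for the actual schema.

```python
import json
import urllib.request
from typing import Iterable, Iterator

BASE_URL = "http://localhost:8000"  # assumption: adjust to your deployment


def build_chat_request(message: str, session_id: str, agent_id: str) -> urllib.request.Request:
    """Build a POST request for /api/v1/chat (body field names are hypothetical)."""
    body = json.dumps(
        {"agent_id": agent_id, "message": message, "session_id": session_id}
    ).encode("utf-8")
    return urllib.request.Request(
        f"{BASE_URL}/api/v1/chat",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def iter_events(lines: Iterable[bytes]) -> Iterator[dict]:
    """Parse newline-delimited JSON chunks, skipping blank keep-alive lines."""
    for raw in lines:
        text = raw.decode("utf-8").strip()
        if text:
            yield json.loads(text)


def stream_chat(message: str, session_id: str, agent_id: str) -> Iterator[dict]:
    """Send the request and yield parsed events as they arrive."""
    request = build_chat_request(message, session_id, agent_id)
    with urllib.request.urlopen(request) as response:
        yield from iter_events(response)  # HTTP responses iterate line by line
```

Reusing the same `session_id` across calls is what would let an agent see prior turns, as described under Key concepts.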

Architecture

Key concepts

| Concept | Description |
|---|---|
| Agent | An AI assistant with a system prompt, tool subset, and optional model override. Runs in a ReAct loop. |
| Orchestration | A DAG (directed acyclic graph) of steps. Each step can be an agent call, LLM call, tool call, branch, loop, or human gate. |
| Tool | A function an agent can call — native (Sandbox, Vault, SQL…), MCP server, or custom REST/Python. |
| MCP Server | A local or remote process exposing tools via the Model Context Protocol. |
| Vault | Persistent file storage accessible to agents across sessions. |
| Session | Conversation context scoped to a session_id. Agents remember prior turns. |
| Schedule | Interval or cron trigger that runs an agent or orchestration automatically. |

What makes Synapse different

Cut costs without cutting quality

Run a different model at every step. Use a fast, cheap model for routing and classification; switch to a powerful model only for the steps that need it. One orchestration, many models — you control exactly where the compute goes.
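One way to picture per-step model selection is a default model plus optional overrides. This is a conceptual sketch only: the `Step` class, `resolve_model`, and the model names are hypothetical and do not reflect Synapse's configuration format.

```python
from dataclasses import dataclass
from typing import Optional

DEFAULT_MODEL = "local-small"  # hypothetical name for a fast, cheap model


@dataclass
class Step:
    name: str
    model: Optional[str] = None  # per-step override; None falls back to the default


def resolve_model(step: Step, default: str = DEFAULT_MODEL) -> str:
    """Return the model a step should run on."""
    return step.model or default


steps = [
    Step("classify_request"),                   # routing: cheap default model
    Step("draft_report", model="cloud-large"),  # hypothetical powerful model
    Step("format_output"),
]
plan = {step.name: resolve_model(step) for step in steps}
```

Only `draft_report` pays for the larger model here; the other two steps stay on the default.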

Workflows that do exactly what you designed

Orchestrations are strict DAGs. Execution follows the exact path you defined — no surprises, no hallucinated detours. For steps where the next action is already known (fetch this, parse that, write here), use Tool and LLM steps instead of full agents: zero reasoning overhead, deterministic output, and far cheaper to run.
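To make the DAG idea concrete, here is a minimal sketch of dependency-ordered execution using Python's standard `graphlib`. It is not Synapse's engine: `run_dag` and the `fetch`, `parse`, and `write` steps are hypothetical stand-ins for Tool and LLM steps, written as plain functions to show that no reasoning loop is involved.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

State = Dict[str, object]
StepFn = Callable[[State], State]


def run_dag(steps: Dict[str, StepFn], depends_on: Dict[str, List[str]], state: State) -> List[str]:
    """Run each step once, after all of its dependencies; return the execution order."""
    sorter = TopologicalSorter({name: depends_on.get(name, []) for name in steps})
    order = list(sorter.static_order())  # raises CycleError if the graph is not a DAG
    for name in order:
        state.update(steps[name](state))  # each step reads state and returns new keys
    return order


# Hypothetical deterministic steps: fetch this, parse that, write here.
steps = {
    "fetch": lambda s: {"raw": " 3, 1, 2 "},
    "parse": lambda s: {"numbers": sorted(int(x) for x in s["raw"].split(","))},
    "write": lambda s: {"report": f"sorted: {s['numbers']}"},
}
depends_on = {"parse": ["fetch"], "write": ["parse"]}

state: State = {}
order = run_dag(steps, depends_on, state)
```

Because the order is derived from the declared dependencies rather than chosen by a model, the same graph always runs the same way.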

Turn anything into a tool

Your existing systems already provide the capabilities; Synapse just makes them available to agents:

- Any Python program → drop it in, it becomes a sandboxed agent tool

- Any REST API or webhook → describe its schema, agents call it natively

- Any MCP server → local subprocess or remote HTTP, connected in seconds

- Any orchestration → promote it to an agent; chain orchestrations like functions
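The "describe its schema" idea can be sketched for the Python case as deriving a JSON-Schema-style description from a function signature. This is an illustration under assumptions, not Synapse's actual tool format: `describe_tool`, the output layout, and `convert_currency` are all hypothetical.

```python
import inspect
from typing import Any, Callable, Dict

# Rough mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(func: Callable[..., Any]) -> Dict[str, Any]:
    """Derive a JSON-Schema-style tool description from a function signature."""
    properties: Dict[str, Any] = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unknown types fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def convert_currency(amount: float, rate: float, round_to: int = 2) -> float:
    """Convert an amount using a fixed exchange rate."""
    return round(amount * rate, round_to)


tool = describe_tool(convert_currency)
```

A description like this is what lets an agent know which arguments a tool expects and which are optional.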

Never blocked on a human decision

Human steps pause execution mid-workflow and wait. When the person responds — via the UI, Slack, Telegram, or any connected messaging channel — the run resumes exactly where it left off. No polling, no timeouts you didn't set.
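The pause-and-resume behaviour can be approximated by checkpointing run state when a human step is reached and reloading it when a decision arrives. The sketch below is a simplified model, assuming a JSON file as the store; the step names, the `run` function, and the state layout are hypothetical and say nothing about how Synapse persists runs internally.

```python
import json
import tempfile
from pathlib import Path
from typing import List, Optional

STEPS: List[str] = ["research", "summarise", "await_approval", "publish"]
HUMAN_STEPS = {"await_approval"}


def run(store: Path, decision: Optional[str] = None) -> dict:
    """Advance the run until it finishes or reaches an unanswered human step."""
    run_state = json.loads(store.read_text()) if store.exists() else {"next": 0, "log": []}
    while run_state["next"] < len(STEPS):
        step = STEPS[run_state["next"]]
        if step in HUMAN_STEPS:
            if decision is None:
                run_state["status"] = "waiting_for_human"
                store.write_text(json.dumps(run_state))  # checkpoint, then stop
                return run_state
            run_state["log"].append(f"{step}:{decision}")
            decision = None  # one decision answers exactly one gate
        else:
            run_state["log"].append(step)
        run_state["next"] += 1
    run_state["status"] = "done"
    store.write_text(json.dumps(run_state))
    return run_state


store = Path(tempfile.mkdtemp()) / "run.json"
paused = run(store)                        # stops at the human gate
resumed = run(store, decision="approved")  # a later call picks up from the checkpoint
```

Since the checkpoint lives on disk rather than in memory, the second call could just as well happen in a new process after a restart.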

Run it anywhere, own your data

Full local operation with Ollama. Or mix: local models for some agents, cloud APIs for others. No vendor lock-in on models, no data sent anywhere you didn't choose.

Capabilities at a glance

| Capability | Detail |
|---|---|
| Multi-model orchestrations | Per-step model override — mix Gemini Flash, Claude Opus, and local Ollama in one workflow |
| Orchestrations as agents | Promote any orchestration to an agent; nest pipelines inside pipelines |
| Deterministic Tool steps | Skip the ReAct loop entirely — call a specific tool directly with state values |
| Resumable human gates | Human steps survive server restarts; runs pick up exactly where they paused |
| Docker-sandboxed Python | Agents write and execute Python in an isolated container — safe by default |
| Cost limits per run | Set a max-spend per orchestration run — execution halts if the budget is hit |
| 5 messaging platforms | Slack, Discord, Telegram, Teams, WhatsApp — with per-channel agent binding |
| 14+ LLM providers | Cloud, local, and CLI providers; no API key needed for Claude/Gemini/Codex CLI |
| Import/Export packs | Portable bundles of agents + orchestrations + MCP configs; 3 curated starter packs |
| AI Builder | Chat with a meta-agent that designs and materializes orchestrations for you |
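The per-run cost limit in the table can be thought of as a running total checked before each step. The sketch below shows one possible policy (stop before a step that would push spend over the limit). `RunBudget`, `BudgetExceeded`, and the step costs are made-up illustration values, not Synapse's implementation or real pricing.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a step would push a run past its configured limit."""


class RunBudget:
    def __init__(self, max_spend: float) -> None:
        self.max_spend = max_spend
        self.spent = 0.0

    def charge(self, step: str, cost: float) -> None:
        """Record a step's cost, halting the run if the limit would be exceeded."""
        if self.spent + cost > self.max_spend:
            raise BudgetExceeded(f"{step} would exceed the {self.max_spend:.2f} limit")
        self.spent += cost


budget = RunBudget(max_spend=0.50)
completed = []
try:
    # Hypothetical (step, cost) pairs for a single run.
    for step, cost in [("route", 0.01), ("research", 0.30), ("rewrite", 0.25)]:
        budget.charge(step, cost)
        completed.append(step)
except BudgetExceeded:
    halted = True
else:
    halted = False
```

In this run the third step is never executed, because charging it would take the total past the 0.50 limit.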

Quick links

- Install Synapse — one-command setup for macOS, Linux, Windows, Docker

- Quick Start — create your first agent and chat in 5 minutes

- Building Agents — configure system prompts, tools, and model overrides

- Orchestrations — build multi-step AI pipelines

- REST API Reference — integrate Synapse into your application

- GitHub →